The BrainScaleS-2 system is our latest-generation neuromorphic architecture, designed for brain-inspired computing and machine-learning-inspired applications.

It functions as an accelerated system that utilizes spiking neural networks as a common abstraction for modeling computation.

The architecture is uniquely composed of a custom analog accelerator core that enables the accelerated physical emulation of bio-inspired primitives, combined with a tightly coupled embedded digital SIMD processor and a digital event-routing network.

By supporting hybrid plasticity, which combines analog sensors in the core with digital programmability, the system enables research into large-scale neuromorphic applications and the efficient emulation of complex nervous system functions.

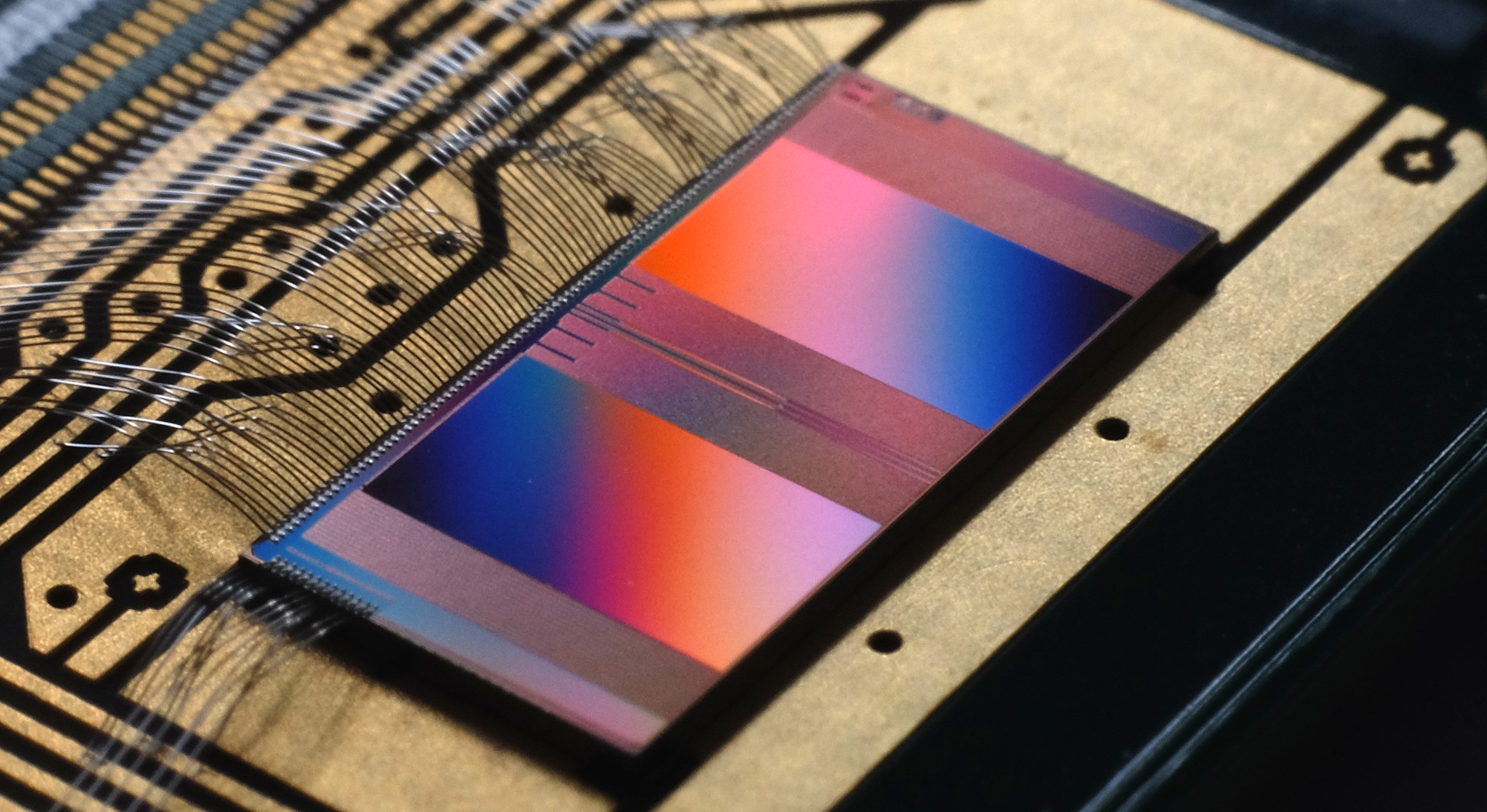

The BrainScaleS-1 system is our neuromorphic architecture developed for wafer-scale emulation of large-scale networks of spiking neurons.

Similar to the earlier Spikey and later BSS-2 architectures, it is built upon a "physical modelling" principle, where analog VLSI circuits are designed to directly mimic the dynamics found in biological nervous systems.

By using the intrinsic properties of electronic components to implement neurons and synapses, the system operates in continuous time.

This approach yields very high emulation speed, reaching an acceleration factor of 10,000 relative to biological real time.

Furthermore, its fault-tolerant design enables wafer-scale integration, allowing it to host the largest spiking network emulations involving analog components and individual synapses to date.
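
To make the acceleration factor of 10,000 quoted above concrete, the following minimal sketch converts a span of biological time into the corresponding hardware run time. The function name `wall_clock_seconds` is a hypothetical helper introduced for illustration, and the calculation ignores configuration and data-transfer overhead.

```python
ACCELERATION_FACTOR = 10_000  # speed-up relative to biological real time


def wall_clock_seconds(biological_seconds: float,
                       acceleration: float = ACCELERATION_FACTOR) -> float:
    """Return the hardware run time needed to emulate a span of biological time.

    Illustrative helper only: it divides by the acceleration factor and
    does not model configuration or I/O overhead.
    """
    if acceleration <= 0:
        raise ValueError("acceleration must be positive")
    return biological_seconds / acceleration


# One biological second and one biological day, expressed in hardware time.
one_second = wall_clock_seconds(1.0)        # about 100 microseconds
one_day = wall_clock_seconds(24 * 60 * 60)  # about 8.64 seconds
print(one_second, one_day)
```

Under this idealized estimate, a full day of biological network activity corresponds to less than ten seconds of emulation time.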

The BrainScaleS-2 (BSS-2) software ecosystem is designed to bridge the gap between biological modelling and machine-learning-inspired training through several abstraction layers.

For computational neuroscience, the system supports the PyNN API via pyNN.brainscales2, allowing researchers to define network topologies using populations and projections without needing expert knowledge of the underlying hardware.
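
The population and projection abstraction can be illustrated with a small, hardware-free sketch. The classes below are hypothetical stand-ins written for this example; they are not the pyNN.brainscales2 API and only show how a topology reduces to groups of neurons plus the connections between them.

```python
from dataclasses import dataclass, field
from itertools import product


@dataclass
class Population:
    """A named group of identical neurons (hypothetical stand-in, not the PyNN class)."""
    label: str
    size: int


@dataclass
class Projection:
    """All-to-all connections between two populations with a uniform weight."""
    pre: Population
    post: Population
    weight: float
    connections: list = field(init=False)

    def __post_init__(self):
        # Enumerate every (source index, target index) pair.
        self.connections = list(
            product(range(self.pre.size), range(self.post.size))
        )


inputs = Population("inputs", size=4)
outputs = Population("outputs", size=2)
projection = Projection(inputs, outputs, weight=0.5)
print(len(projection.connections))  # 4 sources x 2 targets
```

A description at this level says nothing about where neurons are placed or how events are routed, which is the detail the PyNN layer is meant to hide from the user.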

A standout feature of BSS-2 is its "programmable" plasticity, enabled by two tightly coupled embedded SIMD processors per chip that have real-time, vectorized access to hardware observables like synaptic correlation sensors in the analog core.

This hybrid architecture allows for the implementation of flexible learning rules and homeostatic processes directly on the substrate.
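
As a rough illustration of such a learning rule, the sketch below applies an STDP-like update to one row of synapses from two correlation readings per synapse. It is plain Python written for this example, not code for the embedded processors: the names `update_weights`, `causal` and `acausal`, the learning rate, and the 6-bit weight range are all assumptions.

```python
WEIGHT_MAX = 63  # assumption: weights saturate at a 6-bit maximum


def update_weights(weights, causal, acausal, learning_rate=0.1):
    """Apply an STDP-like update to one row of synapses.

    `causal` and `acausal` stand for per-synapse correlation readings
    (pre-before-post and post-before-pre). Hypothetical rule for
    illustration; on hardware the same arithmetic would be vectorized.
    """
    if not (len(weights) == len(causal) == len(acausal)):
        raise ValueError("all inputs must have the same length")
    updated = []
    for w, c, a in zip(weights, causal, acausal):
        new_w = w + learning_rate * (c - a)
        # Round to an integer weight and clip to the valid range.
        updated.append(min(WEIGHT_MAX, max(0, round(new_w))))
    return updated


row = update_weights(weights=[10, 62, 1],
                     causal=[30, 40, 0],
                     acausal=[10, 0, 50])
print(row)
```

The first weight is potentiated, the second saturates at the maximum, and the third is depressed down to zero.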

For gradient-based training, the hxtorch library (and its spiking extension hxtorch.snn) integrates BSS-2 into the PyTorch ecosystem, supporting hardware in-the-loop (ITL) training through surrogate gradients and automatic differentiation.
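
The idea behind surrogate gradients can be sketched without any framework: the forward pass keeps the non-differentiable spike threshold, while the backward pass substitutes a smooth function for its derivative. The example below uses a SuperSpike-style surrogate as one common choice; the function names and the parameters `threshold` and `beta` are assumptions, and this is not the hxtorch implementation.

```python
def spike(v, threshold=1.0):
    """Forward pass: Heaviside step, 1.0 once the membrane potential reaches threshold."""
    return 1.0 if v >= threshold else 0.0


def surrogate_grad(v, threshold=1.0, beta=10.0):
    """Backward pass: SuperSpike-style smooth stand-in for the step's derivative.

    The true derivative is zero almost everywhere, so it carries no
    learning signal; this surrogate peaks at the threshold and decays
    with distance from it. `beta` sets how sharply it decays.
    """
    return 1.0 / (1.0 + beta * abs(v - threshold)) ** 2


for v in (0.5, 0.9, 1.0, 1.1):
    print(v, spike(v), surrogate_grad(v))
```

In hardware in-the-loop training, the forward quantities would come from measurements on the chip, while a gradient of this kind is evaluated in software.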

The latest development in this stack is jaxsnn, a library built on the JAX framework that focuses on event-driven gradient estimation.

By utilizing native event-based data structures instead of dense time-discrete tensors, jaxsnn provides a more memory-efficient and compute-efficient approach to training, particularly when using algorithms like EventProp that compute gradients based on the precise timing of individual spikes.
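
The memory argument can be made concrete by counting entries in the two representations. The sketch below is plain Python with hypothetical helper names and does not reproduce jaxsnn's actual data structures; it compares a dense, time-discrete spike tensor with a sorted list of (time, neuron index) events.

```python
def dense_entries(num_neurons, duration, dt):
    """Entries in a time-discrete spike tensor: one per neuron per time step."""
    return num_neurons * round(duration / dt)


def to_events(spike_times_per_neuron):
    """Flatten per-neuron spike times into a time-sorted list of (time, neuron) events."""
    return sorted(
        (t, idx)
        for idx, times in enumerate(spike_times_per_neuron)
        for t in times
    )


# Three neurons observed for 100 ms at a 0.1 ms resolution (illustrative numbers).
spike_times = [[1.5, 20.0], [], [7.25]]
events = to_events(spike_times)
print(dense_entries(len(spike_times), duration=100.0, dt=0.1), len(events))
```

For sparse activity the event list grows with the number of spikes rather than with the number of time steps, and it keeps the spike times at full precision instead of rounding them to a time grid.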

The BrainScaleS system is a versatile substrate supporting applications ranging from computational neuroscience, where it emulates multi-compartmental neurons and complex biological plasticity rules, to high-speed neurorobotics featuring closed-loop motor control and autonomous virtual insect navigation. Machine-learning-inspired training makes the event-driven substrate usable as an energy-efficient alternative to conventional deep neural networks.

European Institute for Neuromorphic Computing

Im Neuenheimer Feld 225a

69120 Heidelberg

Germany

Electronic Visions Group – Prof. Dr. Johannes Schemmel

phone: +49 6221 54 9849

fax: +49 6221 54 9839

email: schemmel(at)ziti.uni-heidelberg.de

(All applications only via 'Open Positions')