The Hybrid Multiscale Facility (HMF) combines a neuromorphic computing system, built from custom-designed neural circuits in microelectronics, with conventional high-performance numerical computers.

The neuromorphic system is a physical model of neural microcircuits featuring low energy consumption per neural event, fault tolerance, scalability and the capability to learn.

Networks of up to 1.6 million neurons and 0.4 billion dynamic synapses can be assembled, with user-configurable parameters and network architectures.

Merging the two computational concepts into a hybrid system yields a new experimental platform that can bridge temporal scales from milliseconds to years and, within the same experiment, spatial scales from the single cell to functional brain areas, at speeds far exceeding biological real time.

The Spikey neuromorphic system is a plug-and-play device that emulates spiking neural networks with physical models of neurons and synapses implemented in mixed-signal microelectronics.

The Spikey chip comprises 384 neurons and 98304 synapses.

Network dynamics on Spikey evolve approximately 10,000 times faster than those of their biological archetypes.
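The Spikey figures above translate directly into a few practical numbers. The short sketch below computes the average number of synapses per neuron and converts biological durations into emulation time. It treats the approximate speed-up of 10,000 as exact, an assumption made only for this arithmetic; the function name `emulation_time` is hypothetical.

```python
N_NEURONS = 384
N_SYNAPSES = 98_304
SPEEDUP = 10_000  # approximate acceleration factor, treated as exact here


def emulation_time(bio_seconds: float, speedup: float = SPEEDUP) -> float:
    """Wall-clock seconds needed to emulate bio_seconds of biological time."""
    return bio_seconds / speedup


synapses_per_neuron = N_SYNAPSES / N_NEURONS
print(synapses_per_neuron)        # 256.0
print(emulation_time(1.0))        # 0.0001 (one biological second takes 100 microseconds)
print(emulation_time(24 * 3600))  # 8.64   (one biological day takes under nine seconds)
```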

One of the essential properties of neurons and synapses is that they can change over time, in particular as a result of learning.

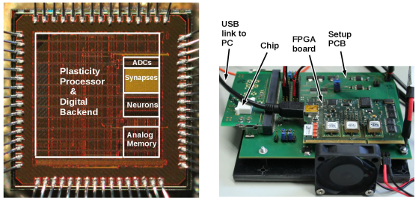

In the newly developed HICANN-DLS chip, we use an on-chip plasticity processing unit (NUX) to control that change.

The prototype chip features an array of 32 × 32 synapses, each of which stores a 6-bit weight as well as analog correlation traces that relate pre- and postsynaptic events.

The plasticity processor can use this information to implement various plasticity rules, of which spike-timing-dependent plasticity (STDP) is just one example.

For this purpose, it has a vector unit with direct access to the analog and digital state of all synapses.
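As a rough illustration of what such a vectorized rule can look like, the sketch below applies a pair-based STDP update to the whole 32 × 32 array at once using NumPy. It assumes two digitized correlation readouts per synapse (one for pre-before-post, one for post-before-pre pairings), a linear update with hypothetical rates `eta_plus` and `eta_minus`, and clipping to the 6-bit range 0 to 63. It mirrors only the data flow; it is not the NUX instruction set or its programming interface.

```python
import numpy as np

ROWS, COLS = 32, 32  # size of the prototype synapse array
W_MAX = 2**6 - 1     # largest value of a 6-bit weight (63)


def stdp_update(weights, causal, acausal, eta_plus=1.0, eta_minus=1.0):
    """Apply one STDP step to every synapse of the array at once.

    weights : (ROWS, COLS) integer array of 6-bit weights
    causal  : (ROWS, COLS) float array, pre-before-post correlation (assumed readout)
    acausal : (ROWS, COLS) float array, post-before-pre correlation (assumed readout)
    eta_plus, eta_minus : hypothetical learning rates
    """
    # Potentiate causal pairings and depress acausal ones, element-wise
    dw = eta_plus * causal - eta_minus * acausal
    # Round to whole weight steps and keep the result inside the 6-bit range
    new_weights = np.rint(weights + dw)
    return np.clip(new_weights, 0, W_MAX).astype(np.uint8)


# Usage: all weights start at 10
w = np.full((ROWS, COLS), 10, dtype=np.uint8)
causal = np.zeros((ROWS, COLS))
acausal = np.zeros((ROWS, COLS))
causal[0, :] = 5.0    # row 0: presynaptic spikes mostly precede postsynaptic ones
acausal[1, :] = 20.0  # row 1: the reverse order dominates
w = stdp_update(w, causal, acausal)
print(w[0, 0], w[1, 0], w[2, 0])  # 15 0 10
```

Operating on whole arrays rather than looping over single synapses reflects the idea of a vector unit with direct access to all synapse states.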

The analog parameter storage used to configure and calibrate the neurons can also be controlled by the plasticity processor.
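One conceivable use of this access is a homeostatic loop over the neuron parameters. The sketch below is a simple proportional controller that nudges one per-neuron parameter so that each neuron's firing rate drifts toward a target. The 10-bit parameter range, the gain, and the assumption that a larger setting means higher excitability are hypothetical choices for illustration, not the HICANN-DLS calibration scheme.

```python
import numpy as np

PARAM_MAX = 1023  # hypothetical 10-bit resolution of one analog parameter cell


def homeostatic_step(params, rates, target_rate, gain):
    """Move each neuron's parameter so that its firing rate drifts toward target_rate.

    params      : integer array, current digital setting per neuron (hypothetical)
    rates       : float array, measured firing rate per neuron
    target_rate : desired firing rate
    gain        : hypothetical controller gain (parameter steps per unit rate error)

    Assumes that raising the parameter makes a neuron more excitable; for a parameter
    with the opposite effect, the sign of gain would have to be flipped.
    """
    error = target_rate - rates  # positive: neuron fires too rarely
    new_params = np.rint(params + gain * error)
    return np.clip(new_params, 0, PARAM_MAX).astype(np.int64)


params = np.array([500, 500, 500])
rates = np.array([5.0, 10.0, 20.0])  # too quiet, on target, too active
print(homeostatic_step(params, rates, target_rate=10.0, gain=2.0).tolist())
# [510, 500, 480]
```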

While it is quite apparent that any thermodynamic theory of neural networks must fall significantly short of explaining the vast repertoire of complex functionality exhibited by our brains, it remains highly instructive to understand how macroscopic observables can emerge from microscopic interactions between neurons.

Brains are rightly called "the most complex objects in the known universe", but what makes them such interesting objects of study is not their complexity per se, but rather that this complexity gives rise to a so far unparalleled capacity for computation.

Understanding the process of self-organization and learning represents the key for understanding intelligence, be it biological or artificial.

Synaptic plasticity lies at the core of this line of research and is one of the most important and multifaceted phenomena in the brain.

Because it occurs on a range of very different time scales, it takes on multiple roles, such as mediating homeostasis, facilitating exploration, or enabling the formation and consolidation of memories.

The many orders of magnitude spanned by these time scales are a key motivation for developing accelerated emulation platforms, which promise insight into processes that would otherwise be prohibitively expensive to study with classical simulation.
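To put these scales into numbers, the sketch below computes how many orders of magnitude separate a millisecond from a year, and how long one year of biological time would take on an accelerated system. It borrows the acceleration factor of roughly 10,000 quoted for Spikey purely for illustration and treats it as exact; other platforms may use different factors.

```python
import math

MS = 1e-3                  # one millisecond, in seconds
YEAR = 365.25 * 24 * 3600  # one Julian year, in seconds
SPEEDUP = 1e4              # illustrative acceleration factor (Spikey figure)

# Orders of magnitude between the fastest and slowest time scale
orders = math.log10(YEAR / MS)
print(round(orders, 1))  # 10.5

# Wall-clock minutes to emulate one biological year at the assumed speed-up
year_hw_minutes = YEAR / SPEEDUP / 60
print(round(year_hw_minutes, 1))  # 52.6
```

A real-time system would need the full year for the same experiment, which is why acceleration matters most for the slowest plasticity processes.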

Electronic Visions Group – Prof. Dr. Johannes Schemmel

Im Neuenheimer Feld 225a

69120 Heidelberg

Germany

phone: +49 6221 549849

fax: +49 6221 549839

email: schemmel(at)kip.uni-heidelberg.de